August, 2020

At present, more than 2.5 quintillion bytes of data are generated every single day (Social Media Today). Now firmly in the mainstream, data science is regarded as one of the most interesting fields today. As a discipline that aims to harness big data, data science incorporates an array of scientific tools, sophisticated algorithms, processes, and knowledge extraction systems that help identify meaningful patterns in structured and unstructured data.

Data science is also applied to data mining.

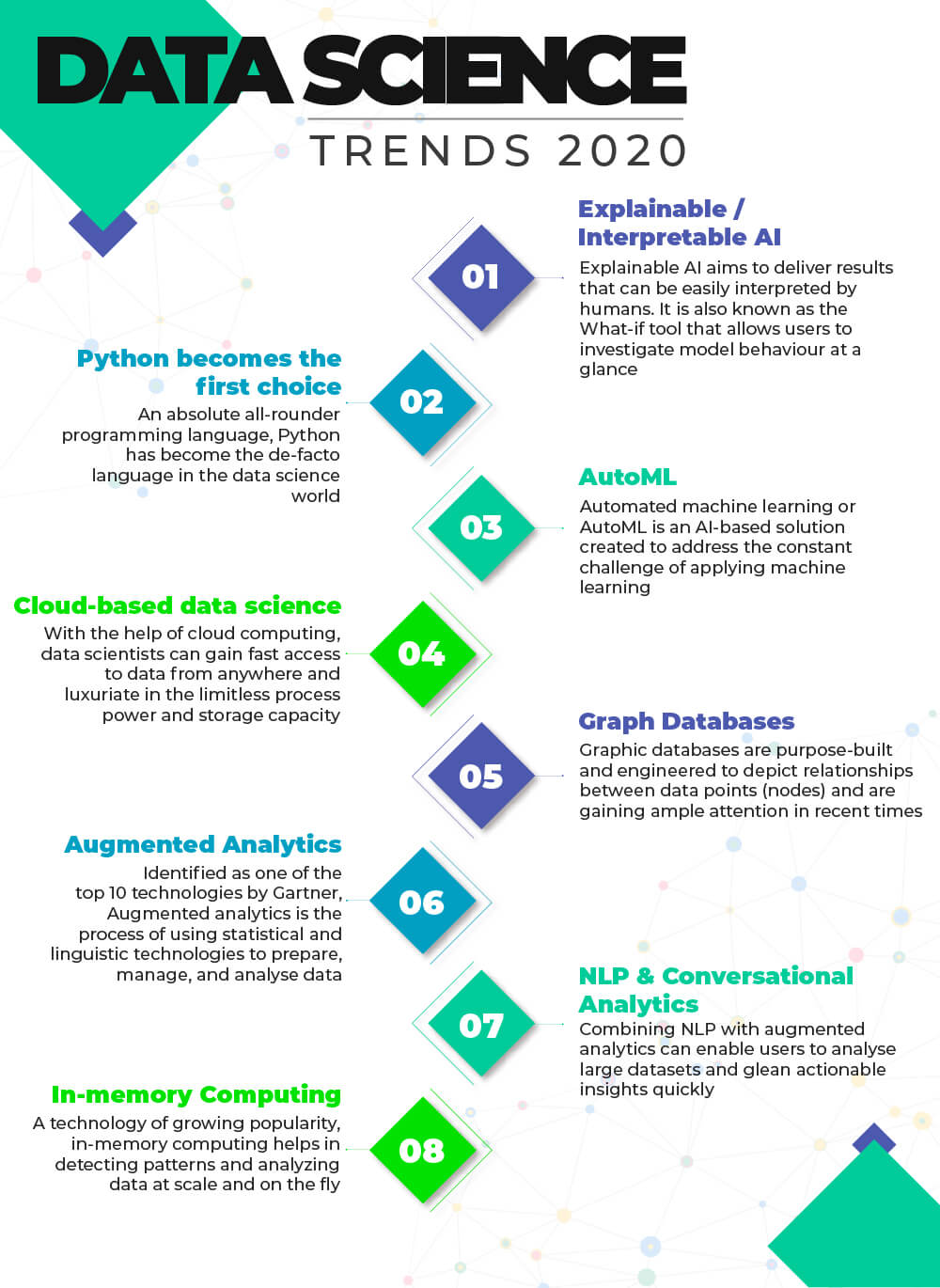

Below is a list of the top data science trends to watch.

#1. Explainable / Interpretable AI

Explainable AI aims to deliver results that can be easily interpreted by humans. In technical terms, it refers to the set of tools and frameworks used to build interpretable and inclusive machine learning models. As one of the hottest topics in machine learning, Explainable AI helps in detecting and resolving bias, drift, and other gaps in data and models. Tooling in this space includes the What-If Tool, which allows users to investigate model behaviour at a glance.
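To make the idea of investigating model behaviour concrete, here is a minimal sketch of permutation importance, one common interpretability technique (it is not taken from any specific tool named above). The `toy_model`, the synthetic data, and all function names are assumptions made purely for illustration.

```python
import random

def mean_squared_error(model, rows, targets):
    """Average squared difference between predictions and targets."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(model, rows, targets, feature, rng):
    """Increase in error when one feature column is randomly shuffled."""
    baseline = mean_squared_error(model, rows, targets)
    shuffled = [r[feature] for r in rows]
    rng.shuffle(shuffled)
    permuted = [r[:feature] + [v] + r[feature + 1:] for r, v in zip(rows, shuffled)]
    return mean_squared_error(model, permuted, targets) - baseline

def toy_model(row):
    """Hypothetical model that relies on feature 0 and ignores feature 1."""
    return 3.0 * row[0]

rng = random.Random(0)  # fixed seed so the sketch is repeatable
rows = [[rng.random(), rng.random()] for _ in range(200)]
targets = [3.0 * r[0] for r in rows]

importances = [permutation_importance(toy_model, rows, targets, f, rng) for f in range(2)]
print(importances)
```

A feature the model depends on shows a clear increase in error when shuffled, while a feature the model ignores shows none, which is the kind of signal a human can read at a glance.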

#2. Python becomes the first choice in programming languages

An all-round programming language, Python has become the de facto language of the data science world. What sets Python apart from other languages is its rich ecosystem of libraries and its integrations with other programming languages. Python also allows users to dig deeper into large datasets and build quick prototypes for problems.

#3. AutoML

Automated machine learning, or AutoML, is an AI-based solution created to address the persistent challenge of applying machine learning. It refers to automating the process of applying machine learning to real-world problems. Small and less technical businesses can benefit greatly from AutoML, and companies have started investing heavily in the development of AutoML tools and services.
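One small piece of what AutoML automates is choosing between candidate models. The sketch below is a deliberately simplified illustration, assuming just two hypothetical candidates (a mean predictor and a straight-line fit) and a made-up dataset; real AutoML products also automate feature engineering, hyperparameter tuning, and more.

```python
def fit_mean(xs, ys):
    """Candidate 1: always predict the average of the training targets."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_linear(xs, ys):
    """Candidate 2: least-squares straight line through the training data."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept

def auto_select(candidates, train, valid):
    """Fit every candidate on `train` and score it on `valid` (lower is better)."""
    scores = {}
    for name, fit in candidates.items():
        model = fit(*train)
        errors = [(model(x) - y) ** 2 for x, y in zip(*valid)]
        scores[name] = sum(errors) / len(errors)
    return min(scores, key=scores.get), scores

# Made-up data following y = 2x + 1, split into training and validation halves.
xs = list(range(20))
ys = [2 * x + 1 for x in xs]
train = (xs[0::2], ys[0::2])
valid = (xs[1::2], ys[1::2])

best_name, scores = auto_select({"mean": fit_mean, "linear": fit_linear}, train, valid)
print(best_name, scores)
```

The user supplies only data and a list of candidates; the selection loop does the rest, which is why this style of tooling appeals to less technical teams.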

#4. Cloud-based data science

Cloud computing has gained momentum in recent years and will continue to do so with AI-driven processes and IoT. The global cloud computing market is expected to grow at a CAGR of 14.9% from 2020 to 2027 (Grand View Research). With the help of cloud computing, data scientists can gain fast access to data from anywhere and draw on virtually unlimited processing power and storage capacity.

Additionally, as the data science field continues to mature in 2020, on-demand computing has become indispensable to data scientists, particularly with the steady growth of data and processing power.

#5. Graph Databases

Big data is expected to grow at an exponential rate in the coming years. Analysing structured and unstructured data at scale with query tools and traditional databases will therefore become more challenging this decade. Graph databases offer one solution to this problem.

Graph databases are purpose-built to represent relationships between data points (nodes). They have been gaining attention recently because they offer immense flexibility in representing data and provide powerful data modeling tools. According to Gartner, the application of graph databases will grow at 100% every year over the next few years.
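The node-and-relationship model can be sketched in a few lines of plain Python. This is only an illustration of the data model, not how any particular graph database is implemented; the `TinyGraph` class, the node names, and the relationship labels are all hypothetical.

```python
from collections import defaultdict

class TinyGraph:
    """Toy in-process graph: nodes are strings, edges carry a relationship label."""

    def __init__(self):
        # node -> list of (relationship, target node)
        self.edges = defaultdict(list)

    def relate(self, source, relationship, target):
        """Add a directed, labelled edge from source to target."""
        self.edges[source].append((relationship, target))

    def neighbours(self, node, relationship):
        """Targets reachable from `node` over edges with the given label."""
        return [t for rel, t in self.edges.get(node, []) if rel == relationship]

graph = TinyGraph()
graph.relate("alice", "FOLLOWS", "bob")
graph.relate("bob", "FOLLOWS", "carol")
graph.relate("alice", "PURCHASED", "laptop")

# Two-hop traversal: who do the people Alice follows follow?
two_hops = [c for b in graph.neighbours("alice", "FOLLOWS")
            for c in graph.neighbours(b, "FOLLOWS")]
print(two_hops)
```

Because relationships are stored directly alongside the nodes, a multi-hop question becomes a simple traversal rather than a chain of table joins.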

#6. Augmented Analytics

Identified as one of the top 10 technologies by Gartner, augmented analytics is the process of using statistical and linguistic technologies to prepare, manage, and analyse data. With the help of augmented analytics, companies can tackle the emerging challenges posed by the complexity and growth of big data. Gartner also positions augmented analytics as the future of data and analytics.

The global augmented analytics market is estimated to grow at a CAGR of 28.4% between 2018 and 2025 and reach $29,856 million by 2025 (Allied Market Research).
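For readers unfamiliar with CAGR (compound annual growth rate), the arithmetic behind such forecasts can be sketched as follows. The starting value of 100 is a hypothetical placeholder, not a figure from the cited report; only the 28.4% rate and the seven-year window come from the text above.

```python
def project(start_value, annual_rate, years):
    """Value after compounding `annual_rate` once per year for `years` years."""
    return start_value * (1 + annual_rate) ** years

def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value and an end value."""
    return (end_value / start_value) ** (1 / years) - 1

# Hypothetical starting value of 100 units, compounded over 2018-2025 (7 years).
end_value = project(100.0, 0.284, 7)
implied_rate = cagr(100.0, end_value, 7)
print(round(end_value, 2), round(implied_rate, 4))
```

Compounding at 28.4% for seven years multiplies the starting value by roughly 5.75, which shows how quickly a high CAGR adds up.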

#7. NLP & Conversational Analytics

Natural Language Processing (NLP) and Conversational Analytics can dramatically influence the adoption of analytics by every user, regardless of their technical expertise. Combining NLP with augmented analytics can enable users to analyse large datasets and glean actionable insights quickly.

#8. In-memory Computing

In-memory computing is the practice of storing information in the main memory (RAM, or Random Access Memory) of servers rather than in disk-based relational databases. A technology of growing popularity, in-memory computing helps in detecting patterns and analysing data at scale and on the fly. The drop in memory prices has made in-memory computing economical for a wide range of business applications.
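As a small-scale illustration of keeping data in RAM instead of on disk, the sketch below uses Python's built-in `sqlite3` module with the special `":memory:"` database. This is a stand-in chosen for convenience, not one of the enterprise in-memory platforms the trend refers to; the `readings` table and its values are made up.

```python
import sqlite3

# ":memory:" asks SQLite to keep the whole database in RAM; nothing is written to disk.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (sensor TEXT, value REAL)")
conn.executemany(
    "INSERT INTO readings VALUES (?, ?)",
    [("a", 1.5), ("a", 2.5), ("b", 4.0)],  # hypothetical sensor readings
)

# Aggregate on the fly, directly against the in-memory data.
averages = dict(conn.execute("SELECT sensor, AVG(value) FROM readings GROUP BY sensor"))
conn.close()
print(averages)
```

The data disappears when the connection closes, which highlights the trade-off: in-memory storage buys speed at the cost of durability unless it is paired with persistence or replication.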

Conclusion

Digitization has greatly changed the way businesses research and analyse. As technology continues to advance and companies increasingly invest in digital transformation initiatives, data will keep growing steadily and at massive scale. This will, in turn, raise the prominence of data science.

Author

SGA Knowledge Team